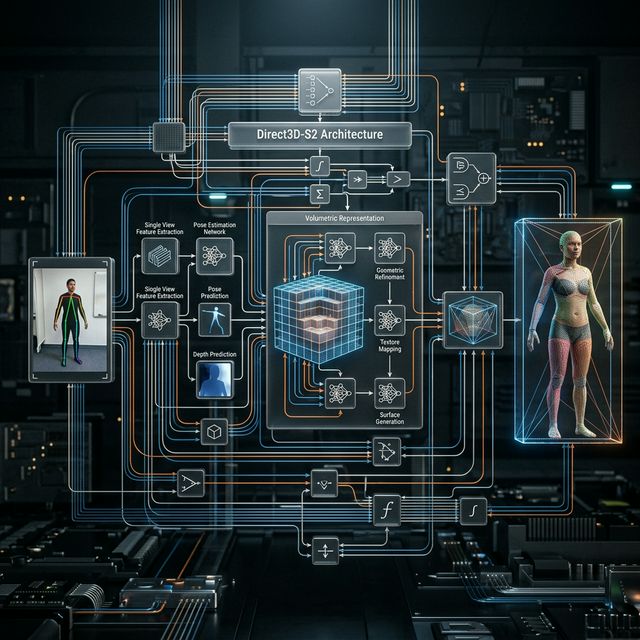

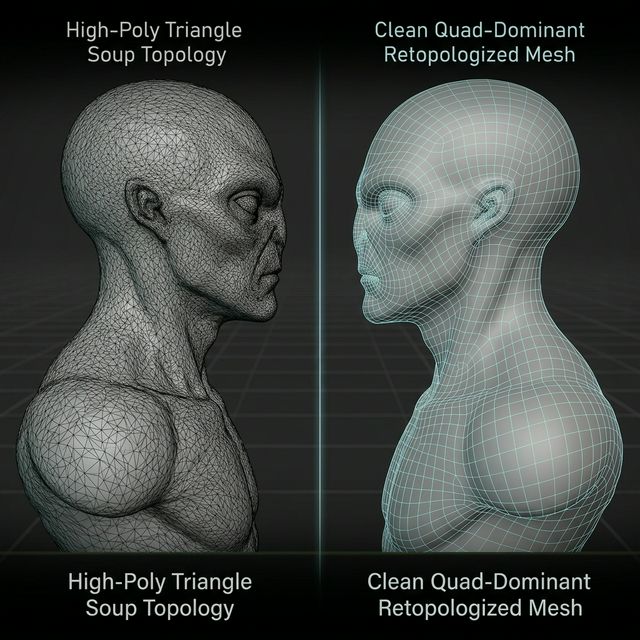

The landscape of three-dimensional content creation is undergoing a tectonic shift. For years, the promise of “AI Image to 3D” was hampered by low-fidelity outputs, often described as “triangle soup” by frustrated technical artists. These early iterations relied on probability-based diffusion that struggled with volumetric consistency and topological integrity. Neural4D (N4D) represents a fundamental departure from these limitations, introducing the Direct3D-S2 architecture and Spatial Sparse Attention (SSA) to create engine-ready assets from single reference photos.

This Neural4D review explores the technical foundations that set it apart from the noise of the current AI boom. We will examine the core reconstruction engine, the material synthesis pipeline, and the practical workflow implications for studios transitioning away from manual retopology.

The Problem with Probability-Based Geometry

To understand why Neural4D is significant, one must first recognize the failure of standard 3D diffusion models. Most consumer AI tools generate geometry by interpreting pixel depth through a “best guess” lens. While this produces attractive browser-based previews, the resulting OBJ or GLB files are often a mess of non-manifold edges and overlapping polygons.
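Non-manifold edges, at least, are easy to detect mechanically. The following is a minimal Python sketch, not part of any Neural4D tooling. It assumes a mesh is given as a list of faces, each a tuple of vertex indices as in an OBJ file, and the helper names are hypothetical. An edge shared by more than two faces is non-manifold, and an edge used by only one face marks an open border. It does not detect overlapping (self-intersecting) polygons, which need a geometric intersection test.

```python
from collections import Counter
from typing import Iterable, List, Sequence, Tuple

Edge = Tuple[int, int]


def edge_face_counts(faces: Iterable[Sequence[int]]) -> Counter:
    """Count how many faces use each undirected edge (a, b) with a < b."""
    counts: Counter = Counter()
    for face in faces:
        n = len(face)
        for i in range(n):
            a, b = face[i], face[(i + 1) % n]  # wrap around to close the polygon
            counts[(min(a, b), max(a, b))] += 1
    return counts


def non_manifold_edges(faces: Iterable[Sequence[int]]) -> List[Edge]:
    """Edges shared by more than two faces cannot bound a clean 2-manifold surface."""
    return sorted(e for e, c in edge_face_counts(faces).items() if c > 2)


def boundary_edges(faces: Iterable[Sequence[int]]) -> List[Edge]:
    """Edges used by exactly one face are open borders (holes) in the surface."""
    return sorted(e for e, c in edge_face_counts(faces).items() if c == 1)


if __name__ == "__main__":
    # Closed tetrahedron: every edge is shared by exactly two faces.
    tetra = [(0, 1, 2), (0, 3, 1), (1, 3, 2), (2, 3, 0)]
    print(non_manifold_edges(tetra), boundary_edges(tetra))  # [] []
    # Three triangles fanned around edge (0, 1): a non-manifold "fin".
    fin = [(0, 1, 2), (1, 0, 3), (0, 1, 4)]
    print(non_manifold_edges(fin))  # [(0, 1)]
```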

Neural4D solves this by moving from “guessing” to “reconstruction.” Its Direct3D-S2 algorithm does not simply project a texture onto a flat mesh; it reconstructs the object's full volume in a latent space indexed by 3D coordinates. This prevents the “melted candle” effect common in weaker tools, where the geometry collapses once the camera orbits to the back of the generated object.

Direct3D-S2: Volumetric Reconstruction at Scale

The heart of N4D is the Direct3D-S2 engine. Unlike traditional photogrammetry, which requires dozens of high-resolution images under consistent lighting, Direct3D-S2 can infer the structure of an object from a single 2D input. It does this by drawing on shape priors learned during training rather than on multi-view measurements.

When you upload a photo of a mechanical asset, such as a sci-fi drone or a vintage camera, N4D identifies its hard-surface structure. It recognizes that a lens is a cylinder and a body is a box-like volume. This structural awareness keeps handles, buttons, and panels as distinct geometric entities rather than mere “bumps” on a single mesh.
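Whether parts such as handles and buttons really came out as separate pieces can be checked on an exported mesh by counting its connected components. Below is a minimal union-find sketch. It assumes faces are tuples of vertex indices and that faces belonging to one part share vertex indices (welded vertices). `connected_parts` is a hypothetical helper. It inspects the exported file only, not how Neural4D segments the object internally.

```python
from typing import Dict, List, Sequence


def connected_parts(faces: Sequence[Sequence[int]]) -> List[List[int]]:
    """Group face indices into parts; faces sharing any vertex index join the same part."""
    parent: Dict[int, int] = {}

    def find(x: int) -> int:
        # Path halving keeps the union-find trees shallow.
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    def union(a: int, b: int) -> None:
        ra, rb = find(a), find(b)
        if ra != rb:
            parent[rb] = ra

    for face in faces:
        for v in face:
            parent.setdefault(v, v)
        for v in face[1:]:
            union(face[0], v)  # all vertices of one face belong together

    parts: Dict[int, List[int]] = {}
    for i, face in enumerate(faces):
        parts.setdefault(find(face[0]), []).append(i)
    return list(parts.values())


if __name__ == "__main__":
    body = [(0, 1, 2, 3)]            # a camera body panel
    button = [(4, 5, 6), (4, 6, 7)]  # a separate button shell
    print(connected_parts(body + button))  # [[0], [1, 2]]
```

A mesh that reports a single part even though the photo showed clearly separate components is a hint that the parts were fused into one surface.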

Spatial Sparse Attention (SSA) and Coherence

A common critique of AI-generated 3D is “jitter” or “hallucinations” in the geometry. If the front of a character has a certain eye shape, the back of the head must logically follow a specific curvature. Neural4D uses Spatial Sparse Attention (SSA) to maintain this 360-degree coherence.

SSA concentrates computation on the regions of the voxel grid that actually contain surface geometry. By skipping the empty “dead space,” it can devote more detail to fine-grained areas such as hinges, facial features, or intricate mechanical vents. The result is a mesh that is not only visually accurate but also structurally consistent from every angle.
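The core idea, spending attention only where geometry exists, can be shown in a few lines. This is a simplified sketch, not Neural4D's or Direct3D-S2's implementation (the published SSA design is more elaborate). Only occupied voxels become tokens, and each token attends only to occupied voxels in its own cubic window. The window size, the single attention head, and the use of raw features as queries, keys, and values are simplifying assumptions, and the function names are hypothetical.

```python
import math
from collections import defaultdict
from typing import Dict, List, Sequence, Tuple

Coord = Tuple[int, int, int]


def window_key(c: Coord, window: int) -> Coord:
    """Index of the cubic window (window**3 voxels) that contains voxel c."""
    return (c[0] // window, c[1] // window, c[2] // window)


def sparse_window_attention(
    coords: Sequence[Coord], feats: Sequence[Sequence[float]], window: int
) -> List[List[float]]:
    """Single-head attention in which each occupied voxel attends only to the
    occupied voxels in its own window. Empty voxels never become tokens.
    Queries, keys and values are the raw features (no learned projections)."""
    groups: Dict[Coord, List[int]] = defaultdict(list)
    for i, c in enumerate(coords):
        groups[window_key(c, window)].append(i)

    dim = len(feats[0]) if feats else 0
    scale = 1.0 / math.sqrt(dim) if dim else 1.0
    out = [[0.0] * dim for _ in coords]
    for members in groups.values():
        for i in members:
            scores = [scale * sum(a * b for a, b in zip(feats[i], feats[j])) for j in members]
            top = max(scores)  # subtract the max for a numerically stable softmax
            weights = [math.exp(s - top) for s in scores]
            total = sum(weights)
            for w, j in zip(weights, members):
                for d in range(dim):
                    out[i][d] += (w / total) * feats[j][d]
    return out


def attention_pairs(coords: Sequence[Coord], window: int) -> int:
    """Query-key pairs evaluated by the windowed sparse scheme above."""
    sizes: Dict[Coord, int] = defaultdict(int)
    for c in coords:
        sizes[window_key(c, window)] += 1
    return sum(n * n for n in sizes.values())


if __name__ == "__main__":
    # A 64^3 grid whose only occupied voxels form one flat 64 x 64 layer.
    plane = [(x, y, 0) for x in range(64) for y in range(64)]
    print((64 ** 3) ** 2, attention_pairs(plane, 8))  # 68719476736 262144
```

For that flat layer, dense attention over all 64³ voxels would evaluate 2^36 (about 6.9 × 10^10) query-key pairs, while the windowed sparse version with `window=8` evaluates 64 windows × 64² = 262,144. Practical sparse-attention designs add ways for information to flow between windows, which this sketch omits.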

Quad-Dominant Topology for Technical Artists

One of the most impressive findings of this Neural4D review is the output topology. Most AI tools output decimated triangle meshes that are a nightmare for rigging and skinning. N4D offers quad-dominant topology as a built-in output option.

While it is no replacement for manual retopology on hero characters, N4D's output is surprisingly clean. The edge loops follow the natural flow of the geometry, so subdivision surface modifiers can be applied without creating “pinching” or shading artifacts. For environmental props and background assets, we estimate this cuts production time by roughly 70%.
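Claims about quad-dominant output and subdivision behavior can be spot-checked on an exported mesh. The minimal sketch below (hypothetical helper names, faces given as vertex-index tuples) reports the share of triangles, quads, and n-gons, and lists interior vertices whose valence is not 4. In Catmull-Clark subdivision these are extraordinary vertices (often called poles), and pinching or shading artifacts tend to appear around them when they sit on curved areas.

```python
from collections import Counter
from typing import Dict, Sequence


def face_type_ratios(faces: Sequence[Sequence[int]]) -> Dict[str, float]:
    """Share of triangles, quads and n-gons (5+ sides) among the faces."""
    kinds = Counter("tri" if len(f) == 3 else "quad" if len(f) == 4 else "ngon" for f in faces)
    total = len(faces) or 1  # avoid dividing by zero on an empty mesh
    return {k: kinds[k] / total for k in ("tri", "quad", "ngon")}


def interior_poles(faces: Sequence[Sequence[int]]) -> Dict[int, int]:
    """Interior vertices whose valence (number of incident edges) is not 4,
    mapped to that valence. Vertices on open borders are skipped, since
    their valence is naturally lower."""
    edge_uses: Counter = Counter()
    for f in faces:
        for i in range(len(f)):
            a, b = f[i], f[(i + 1) % len(f)]
            edge_uses[(min(a, b), max(a, b))] += 1
    border = {v for edge, uses in edge_uses.items() if uses == 1 for v in edge}
    valence: Counter = Counter()
    for a, b in edge_uses:
        valence[a] += 1
        valence[b] += 1
    return {v: n for v, n in sorted(valence.items()) if v not in border and n != 4}


if __name__ == "__main__":
    # A closed six-quad cube: all quads, but every corner has valence 3.
    cube = [(0, 1, 2, 3), (4, 5, 6, 7), (0, 1, 5, 4),
            (1, 2, 6, 5), (2, 3, 7, 6), (3, 0, 4, 7)]
    print(face_type_ratios(cube))  # {'tri': 0.0, 'quad': 1.0, 'ngon': 0.0}
    print(interior_poles(cube))    # {0: 3, 1: 3, 2: 3, 3: 3, 4: 3, 5: 3, 6: 3, 7: 3}
```

Poles are not errors in themselves (a cube needs eight), so the useful signal is where they fall: poles on flat areas are harmless, poles on tight curves are where subdivision artifacts usually start.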

PBR Texture Synthesis and 8K Resolution

A 3D model is only as good as its materials. Neural4D does not simply “stretch” the input photo over the mesh; it performs full PBR (Physically Based Rendering) synthesis, generating separate Albedo, Normal, Roughness, and Metallic maps.
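When such maps are exported to glTF/GLB, roughness and metallic are not stored as separate images. The glTF 2.0 specification reads roughness from the G channel and metalness from the B channel of a single `metallicRoughnessTexture`, while albedo (`baseColorTexture`) and normals remain separate textures. Below is a minimal sketch of that packing, assuming the maps arrive as flat lists of linear floats in [0, 1]. `pack_orm` and `to_byte` are hypothetical helpers, not Neural4D's exporter, and storing occlusion in the R channel is a common convention rather than a requirement.

```python
from typing import List, Optional, Sequence, Tuple

Pixel = Tuple[int, int, int]


def to_byte(value: float) -> int:
    """Quantize a linear value in [0, 1] to 8 bits, clamping out-of-range input."""
    return round(min(max(value, 0.0), 1.0) * 255)


def pack_orm(
    roughness: Sequence[float],
    metallic: Sequence[float],
    occlusion: Optional[Sequence[float]] = None,
) -> List[Pixel]:
    """Pack per-pixel maps into RGB texels laid out for glTF 2.0:
    G = roughness and B = metalness (what metallicRoughnessTexture expects),
    R = ambient occlusion (glTF's occlusionTexture reads R, so one image can serve both).
    All values are linear, not sRGB-encoded."""
    if len(roughness) != len(metallic):
        raise ValueError("roughness and metallic maps must have the same size")
    if occlusion is None:
        occlusion = [1.0] * len(roughness)  # 1.0 means "not occluded"
    elif len(occlusion) != len(roughness):
        raise ValueError("occlusion map must match the other maps in size")
    return [(to_byte(ao), to_byte(r), to_byte(m))
            for ao, r, m in zip(occlusion, roughness, metallic)]


if __name__ == "__main__":
    # One half-rough, fully metallic texel with no occlusion.
    print(pack_orm([0.5], [1.0]))  # [(255, 128, 255)]
```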

The AI analyzes the lighting in the input photo and removes baked-in shadows, a process known as delighting. As a result, the finished asset can be placed in any lighting environment (such as an Unreal Engine 5 Lumen setup) and respond naturally to its light sources. Textures can be exported at up to 8K resolution, providing the crispness needed for close-up shots and high-end cinematic work.
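Neural4D's delighting method is not described in detail, so the sketch below only illustrates the underlying idea with a deliberately crude, Retinex-style heuristic: treat a heavily blurred copy of the image as the smooth illumination and divide it out, leaving relative reflectance. It works on a grayscale image stored as a list of rows of floats, and the blur radius and helper names are assumptions. It fails on hard-edged cast shadows and on large, uniformly dark materials, which is why practical delighting needs considerably more than this.

```python
from typing import List

Image = List[List[float]]


def box_blur(img: Image, radius: int) -> Image:
    """Mean over a (2r+1) x (2r+1) window, shrunk at the image borders."""
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            vals = [img[yy][xx]
                    for yy in range(max(0, y - radius), min(h, y + radius + 1))
                    for xx in range(max(0, x - radius), min(w, x + radius + 1))]
            out[y][x] = sum(vals) / len(vals)
    return out


def naive_delight(img: Image, radius: int, eps: float = 1e-6) -> Image:
    """Divide out a smooth shading estimate (a blurred copy of the image).
    The result is relative reflectance: about 1.0 where the surface matches its
    surroundings, above or below 1.0 for locally lighter or darker material."""
    shading = box_blur(img, radius)
    return [[p / max(s, eps) for p, s in zip(row, srow)]
            for row, srow in zip(img, shading)]


if __name__ == "__main__":
    # Uniform grey material (albedo 0.5) lit by a left-to-right gradient.
    lit = [[0.5 * (0.2 + 0.1 * x) for x in range(7)] for _ in range(5)]
    flat = naive_delight(lit, radius=1)
    print([round(v, 3) for v in flat[2]])  # [0.8, 1.0, 1.0, 1.0, 1.0, 1.0, 1.067]
```

The gradient disappears in the interior, but the two end columns deviate because their blur window is one-sided: a small example of why border handling and sharp shadows make real delighting hard.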

Integration: From Browser to Unreal Engine

Neural4D's export options are where its utility is greatest. It supports industry-standard formats including FBX, USDZ, and GLB. In our testing, USDZ exports kept their PBR material assignments intact when imported into Blender.
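The material check above was done in Blender with USDZ. GLB exports can also be spot-checked with a short script, because GLB is the documented binary container of glTF 2.0: a 12-byte header (magic `glTF`, version 2, total length) followed by a JSON chunk and an optional binary chunk. This minimal sketch reads only the JSON chunk and lists which PBR texture slots each material references. It does not decode images or validate the file, and the helper names are hypothetical.

```python
import json
import struct
from typing import Dict, List

GLB_MAGIC = 0x46546C67   # the bytes b"glTF" read as a little-endian uint32
CHUNK_JSON = 0x4E4F534A  # the bytes b"JSON"


def read_glb_json(data: bytes) -> dict:
    """Parse the 12-byte GLB header and return the first (JSON) chunk as a dict."""
    magic, version, length = struct.unpack_from("<III", data, 0)
    if magic != GLB_MAGIC:
        raise ValueError("not a GLB file")
    if version != 2:
        raise ValueError(f"unsupported GLB container version {version}")
    if length > len(data):
        raise ValueError("file is truncated")
    chunk_length, chunk_type = struct.unpack_from("<II", data, 12)
    if chunk_type != CHUNK_JSON:
        raise ValueError("first chunk of a GLB must be JSON")
    return json.loads(data[20:20 + chunk_length].decode("utf-8"))


def material_report(gltf: dict) -> Dict[str, List[str]]:
    """For each material, list which PBR-related texture slots are filled."""
    report: Dict[str, List[str]] = {}
    for i, mat in enumerate(gltf.get("materials", [])):
        pbr = mat.get("pbrMetallicRoughness", {})
        slots = [k for k in ("baseColorTexture", "metallicRoughnessTexture") if k in pbr]
        slots += [k for k in ("normalTexture", "occlusionTexture", "emissiveTexture") if k in mat]
        report[mat.get("name", f"material_{i}")] = slots
    return report


def build_glb(gltf: dict) -> bytes:
    """Wrap a glTF JSON document in a minimal GLB (JSON chunk only); demo/test helper."""
    payload = json.dumps(gltf).encode("utf-8")
    payload += b" " * (-len(payload) % 4)  # the JSON chunk is space-padded to 4 bytes
    header = struct.pack("<III", GLB_MAGIC, 2, 12 + 8 + len(payload))
    return header + struct.pack("<II", len(payload), CHUNK_JSON) + payload


if __name__ == "__main__":
    doc = {"asset": {"version": "2.0"},
           "materials": [{"name": "drone_body",
                          "pbrMetallicRoughness": {"baseColorTexture": {"index": 0},
                                                   "metallicRoughnessTexture": {"index": 1}},
                          "normalTexture": {"index": 2}}]}
    print(material_report(read_glb_json(build_glb(doc))))
    # {'drone_body': ['baseColorTexture', 'metallicRoughnessTexture', 'normalTexture']}
```

In practice `read_glb_json` would be given `open(path, "rb").read()` for an exported file; `build_glb` exists only so the example runs without one.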

Asset weight is also manageable. Because N4D weighs the importance of different geometric areas, it distributes vertices adaptively: flat surfaces get fewer polygons, while detailed curves get a higher density. This makes the models suitable for mobile AR applications and high-fidelity VR workflows without exceeding the performance budget.
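Nothing here specifies how Neural4D distributes its vertices, but the principle described, fewer vertices where the surface is flat and more where it curves, is what error-driven simplification does. A familiar 2D analogue is Ramer-Douglas-Peucker polyline simplification, sketched below; 3D decimators such as quadric error metric simplification apply the same idea to triangles. The tolerance value, the sample profile, and the helper names are illustrative assumptions.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float]


def point_segment_distance(p: Point, a: Point, b: Point) -> float:
    """Distance from p to the closest point on segment a-b."""
    (px, py), (ax, ay), (bx, by) = p, a, b
    dx, dy = bx - ax, by - ay
    length_sq = dx * dx + dy * dy
    if length_sq == 0.0:
        return math.hypot(px - ax, py - ay)
    t = max(0.0, min(1.0, ((px - ax) * dx + (py - ay) * dy) / length_sq))
    return math.hypot(px - (ax + t * dx), py - (ay + t * dy))


def rdp(points: Sequence[Point], tolerance: float) -> List[Point]:
    """Ramer-Douglas-Peucker: keep the point farthest from the chord if it
    deviates by more than `tolerance`, then recurse on both halves."""
    if len(points) < 3:
        return list(points)
    a, b = points[0], points[-1]
    index, dmax = 0, -1.0
    for i in range(1, len(points) - 1):
        d = point_segment_distance(points[i], a, b)
        if d > dmax:
            index, dmax = i, d
    if dmax <= tolerance:
        return [a, b]  # every interior point is within tolerance: drop them all
    left = rdp(points[: index + 1], tolerance)
    right = rdp(points[index:], tolerance)
    return left[:-1] + right  # the split point appears in both halves once


if __name__ == "__main__":
    # Profile of a prop: a flat 10-unit run, then a quarter-circle fillet of radius 2.
    flat = [(float(x), 0.0) for x in range(11)]
    arc = [(10 + 2 * math.sin(t), 2 - 2 * math.cos(t))
           for t in (k * math.pi / 32 for k in range(1, 17))]
    kept = rdp(flat + arc, tolerance=0.05)
    print(len(flat + arc), "->", len(kept))
```

The flat run collapses to its endpoints, while several points survive along the fillet, which is the same density pattern the paragraph describes for flat versus curved surfaces.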

Workflow Revolution: Beyond Manual Retopology

For a small indie studio, the cost of a full-time 3D modeler can be a barrier to entry. N4D acts as a force multiplier. Instead of spending 20 hours on the base mesh of a complex prop, a developer can generate the primary volume in minutes and spend those saved hours on custom detailing and gameplay logic.

Iteration speed is the real advantage here. You can test ten different prop designs in the time it previously took to model one. This encourages creative risk-taking and can lead to more cohesive world-building.

Conclusion: The New Standard for AI 3D

This Neural4D review confirms that the era of “good enough” AI 3D is over. We have entered the era of “engine-ready” AI 3D. By combining the volumetric logic of Direct3D-S2 with the sharp focus of Spatial Sparse Attention, Neural4D provides a utility that transcends mere novelty. It is a genuine production tool designed for the rigors of modern game development and VFX.

If you are a technical artist or a studio lead looking to modernize your pipeline, the shift to volumetric AI is no longer optional—it is a competitive necessity.

Ready to skip the manual retopology?

Neural4D is not just an AI image to 3D generator; it is the bridge between 2D conceptualization and 3D realization. The fidelity of its quad-dominant meshes and the accuracy of its PBR texture sets make it the clear leader in the high-resolution 3D generation space.