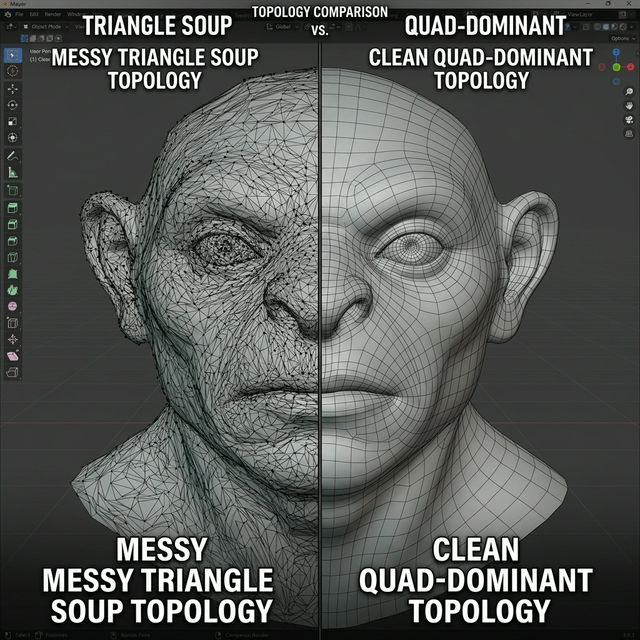

Most current AI image-to-3D generators, which promise high-resolution 3D models from photos, act like slot machines. You upload a JPG, wait two minutes, and receive a blob of uneditable geometry. We call this the “Triangle Soup” effect. It looks acceptable in a tiny browser preview, but the moment you import it into Blender or Unreal Engine, the mesh integrity collapses. Neural4D (N4D) changes this dynamic by moving away from probability-based blobs and toward programmatic, volumetric reconstruction.

The “Triangle Soup” Crisis in Modern GenAI

If you’ve spent any time in the indie dev community, you know the frustration. Most AI generators produce non-manifold geometry. These are meshes with holes, self-intersecting faces, and zero regard for edge flow. When you try to rig a character or apply a subdivision surface modifier, the whole thing breaks.
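A common way to detect the holes and non-manifold edges described above is to count how many faces share each edge. The sketch below is a minimal illustration in plain Python, not part of any Neural4D tooling; the function name `edge_report` and the face-list format are assumptions made for this example.

```python
from collections import Counter


def edge_report(faces):
    """Count boundary and non-manifold edges in a polygon mesh.

    `faces` is a list of vertex-index tuples (triangles, quads, or n-gons).
    An edge used by only one face borders a hole; an edge used by more
    than two faces is non-manifold.
    """
    counts = Counter()
    for face in faces:
        n = len(face)
        for i in range(n):
            a, b = face[i], face[(i + 1) % n]
            # Store each edge with sorted endpoints so direction is ignored.
            counts[(min(a, b), max(a, b))] += 1
    boundary = sum(1 for c in counts.values() if c == 1)
    non_manifold = sum(1 for c in counts.values() if c > 2)
    return {"edges": len(counts), "boundary": boundary, "non_manifold": non_manifold}


# A closed tetrahedron: every edge is shared by exactly two faces.
tetra = [(0, 1, 2), (0, 3, 1), (1, 3, 2), (2, 3, 0)]
print(edge_report(tetra))

# Drop one face and the three edges around the gap become boundary edges.
print(edge_report(tetra[:3]))
```

A mesh that reports zero boundary edges and zero non-manifold edges passes this particular check; it does not test for self-intersecting faces, which need a separate geometric test.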

The industry standard for “Accuracy” usually peaks at about 75% for single-view generation. Why? Because most models are guessing what the back of your object looks like based on a 2D dataset. They don’t understand the physical logic of a chair, a mechanical gear, or a human face. They just see pixels and try to extrude them. This leads to high computational overhead and models that require hours of manual retopology before they are even usable in a production pipeline.

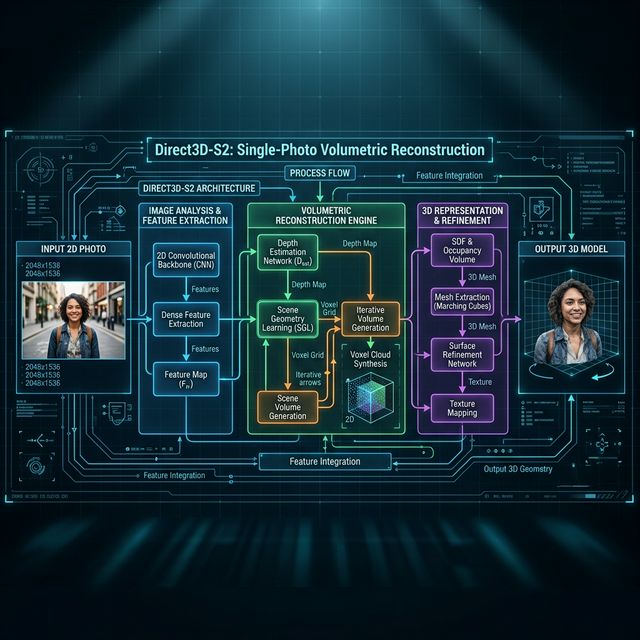

Direct3D-S2: Architecture Over Brute Force

Instead of just throwing more GPUs at the problem, N4D utilizes the Direct3D-S2 architecture. This isn’t just a marketing term. It refers to a specific way of handling spatial data. While competitors use standard diffusion to “guess” depth, Direct3D-S2 uses a programmatic approach to rebuild the volume.

The result is a quad-dominant mesh. For those who aren’t technical artists, here is why that matters: triangle-heavy meshes with arbitrary edge flow are hard to animate and subdivide. Quads (four-sided polygons) can follow the natural flow of the object’s surface. With N4D, the edge flow is clean enough that you can often use the raw output for background assets without any manual cleanup. This cuts retopology time and makes your asset pipeline significantly faster.
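To make “quad-dominant” concrete, one simple measure is the share of faces that have exactly four vertices. The snippet below is a hedged sketch: `quad_ratio`, `is_quad_dominant`, and the 0.8 threshold are illustrative assumptions, not a published N4D metric.

```python
def quad_ratio(faces):
    """Return the fraction of faces that are quads (four vertices)."""
    if not faces:
        return 0.0
    quads = sum(1 for face in faces if len(face) == 4)
    return quads / len(faces)


def is_quad_dominant(faces, threshold=0.8):
    # The 0.8 cut-off is an arbitrary value chosen for illustration.
    return quad_ratio(faces) >= threshold


# A cube built from six quads.
cube = [(0, 3, 2, 1), (4, 5, 6, 7), (0, 1, 5, 4),
        (3, 7, 6, 2), (0, 4, 7, 3), (1, 2, 6, 5)]

# The same cube after triangulation: each quad is split into two triangles.
tri_cube = [t for (a, b, c, d) in cube for t in ((a, b, c), (a, c, d))]

print(quad_ratio(cube), quad_ratio(tri_cube))
```

The ratio says nothing about edge flow quality on its own; it is only a quick first filter before inspecting the topology in a DCC tool.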

Solving “Dead Shadows” with Spatial Sparse Attention (SSA)

Another massive blocker in the 3D industry is baked-in lighting. Most AI generators “hallucinate” lighting onto the texture. If your original photo had a bright light from the left, the generated 3D model will have a permanent highlight on the left and a permanent shadow on the right. In a game engine, where lighting is dynamic, these “dead shadows” look amateurish and break immersion.
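The problem can be shown with a few lines of arithmetic. The sketch below assumes a simple Lambertian (diffuse) shading model and a uniformly grey object with one surface point facing left and one facing right; the names and values are made up for the illustration.

```python
def lambert(normal, light_dir):
    """Diffuse shading term: clamped dot product of two unit vectors."""
    return max(0.0, sum(n * l for n, l in zip(normal, light_dir)))


ALBEDO = 0.8  # assumed true surface colour (grey), identical everywhere
LEFT, RIGHT = (-1.0, 0.0, 0.0), (1.0, 0.0, 0.0)
normals = {"left_side": LEFT, "right_side": RIGHT}

# Texture taken from a photo lit from the left: the shading is baked in.
baked = {k: ALBEDO * lambert(n, LEFT) for k, n in normals.items()}

# In the engine the light now comes from the right.
relit_baked = {k: baked[k] * lambert(n, RIGHT) for k, n in normals.items()}
relit_clean = {k: ALBEDO * lambert(n, RIGHT) for k, n in normals.items()}

print("baked texture:", relit_baked)
print("clean albedo: ", relit_clean)
```

With the baked texture, the right side stays black even though the engine light points straight at it, because the photo's shadow was multiplied into the colour. With a clean albedo texture, the same point lights up as expected.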

Neural4D solves this with Spatial Sparse Attention (SSA). This mechanism allows the AI to differentiate between the shape of the object and the lighting conditions of the source photo. By focusing on “Native Volumetric Logic”, N4D strips the lighting data out of the texture, producing assets that fit a PBR (Physically Based Rendering) workflow.

Why Resolution is a Metric of Logic, Not Pixels

When we talk about “High-Resolution 3D Models”, we aren’t just talking about texture size. High resolution in 3D means geometric fidelity. Can the AI reproduce the sharp edge of a blade or the subtle curve of a lens?

Most probabilistic models smooth everything out. They turn sharp corners into round nubs because the AI is “averaging” the shape. N4D’s SSA mechanism ensures strict adherence to the prompt and the source image. If the photo has a hard edge, the Direct3D-S2 logic creates a hard edge. The mesh is “watertight”, meaning it’s a solid volume ready for 3D printing or complex physics simulations without further adjustment.
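One practical consequence of a watertight mesh is that its enclosed volume is well defined, which is what slicers and physics engines rely on. The sketch below computes that volume for a closed, consistently oriented triangle mesh by summing signed tetrahedron volumes; `signed_volume` and the unit-cube data are assumptions for illustration, and the result is not meaningful for a mesh with holes.

```python
def signed_volume(vertices, triangles):
    """Volume enclosed by a closed, consistently oriented triangle mesh.

    Each triangle (a, b, c) forms a tetrahedron with the origin whose
    signed volume is the scalar triple product a . (b x c) / 6.
    """
    total = 0.0
    for i, j, k in triangles:
        ax, ay, az = vertices[i]
        bx, by, bz = vertices[j]
        cx, cy, cz = vertices[k]
        total += (ax * (by * cz - bz * cy)
                  - ay * (bx * cz - bz * cx)
                  + az * (bx * cy - by * cx))
    return total / 6.0


# Unit cube, faces wound counter-clockwise when seen from outside.
verts = [(0, 0, 0), (1, 0, 0), (1, 1, 0), (0, 1, 0),
         (0, 0, 1), (1, 0, 1), (1, 1, 1), (0, 1, 1)]
quads = [(0, 3, 2, 1), (4, 5, 6, 7), (0, 1, 5, 4),
         (3, 7, 6, 2), (0, 4, 7, 3), (1, 2, 6, 5)]
tris = [t for (a, b, c, d) in quads for t in ((a, b, c), (a, c, d))]

print(signed_volume(verts, tris))
```

A negative result indicates the faces are wound inward rather than outward, which is itself a useful sanity check before sending a model to print.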

Conclusion: The End of the AI Slot Machine

We are moving past the era of “AI as a curiosity” and into “AI as a tool”. If a generator can’t output a clean mesh with separated materials, it isn’t helping your asset pipeline; it’s just a distraction.

By leveraging Spatial Sparse Attention and the Direct3D-S2 architecture, Neural4D provides a path to genuinely professional 3D generation. Stop settling for triangle soup and dead shadows. It is time to use a system that understands the geometry it is trying to create.

Ready to skip the manual retopology? Join Neural4D Early Access and start generating watertight, quad-dominant assets today.
